Advancing machine learning through probabilistic methods at the frontiers of computing and statistics

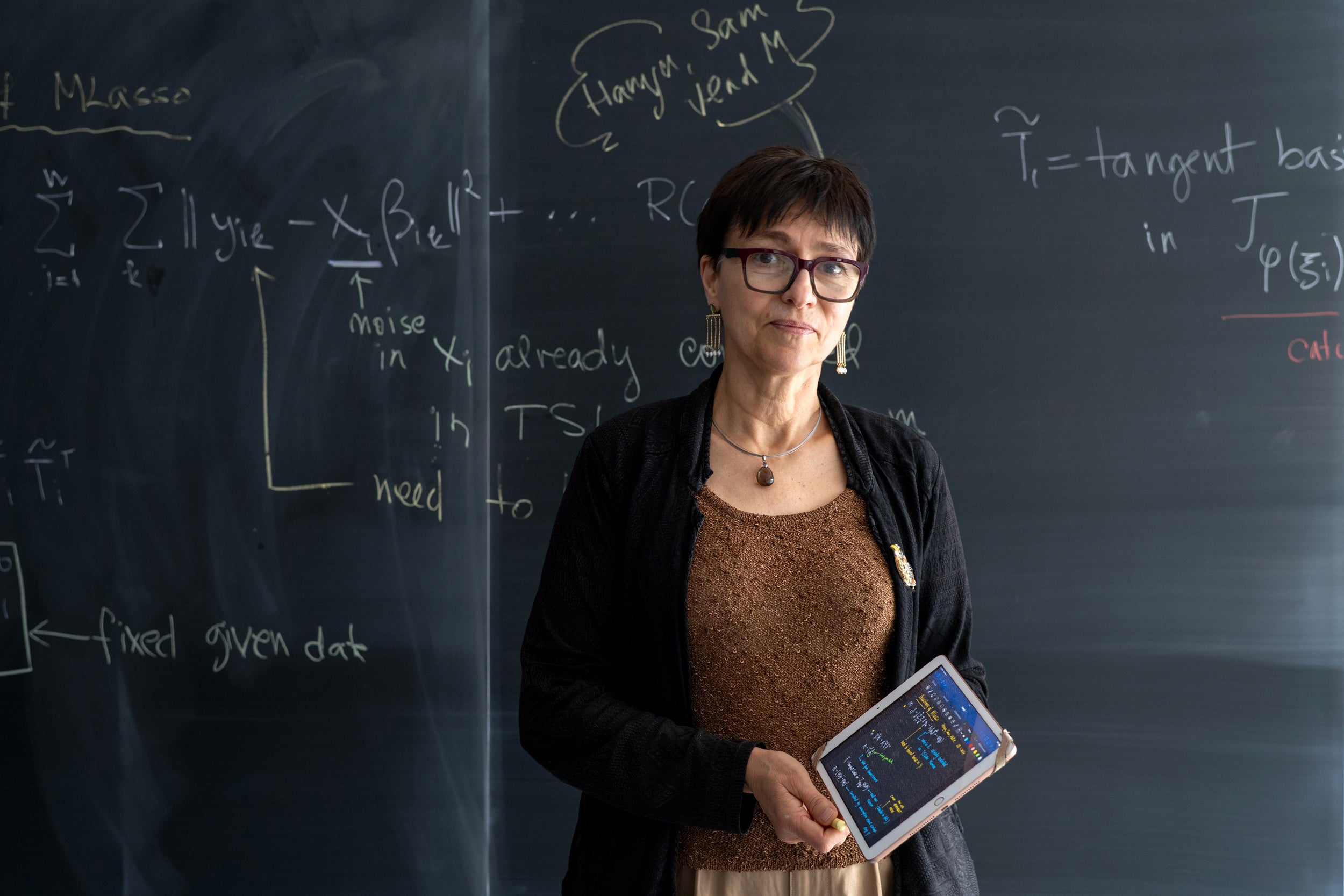

Marina Meila has been named a Canada CIFAR AI Chair, recognizing her expertise in advancing the theoretical foundations of interpretable and explainable machine learning.

She joined the Cheriton School of Computer Science as a Full Professor in July 2025. Previously, she was a Professor in the Department of Statistics at the University of Washington and a Senior Fellow of the University of Washington’s eScience Institute.

Professor Meila’s long-term research interest is in statistical learning, with a focus on discovery of geometric and combinatorial structure in data. Her work develops efficient algorithms, along with theoretical guarantees and validation methods for unsupervised learning under minimal assumptions about the data-generating process. She has collaborated with researchers in applied inverse problems, materials science, and theoretical chemistry.

She has an MS in Electrical Engineering from the Polytechnic Institute of Bucharest, and a PhD in Computer Science and Electrical Engineering from MIT.

What follows is a Q&A in which Professor Meila discusses her research journey, current projects, and future directions as a Canada CIFAR AI Chair.

Tell us a bit about your research and its history.

I grew up in Bucharest, Romania, where I completed a master’s degree in electrical engineering, specializing in automatic control. Afterwards, I worked in a microchip factory for a short time, before joining a computer-aided design group at a research institute for computer engineering in Bucharest. Although these jobs were brief, I carry many memories, friendships and professional relationships from that time. It was there that I was introduced to neural networks, a turning point, as I have been working in machine learning ever since.

It was around this time that the communist government in Romania collapsed. Suddenly, it became possible to pursue a PhD and academic positions, even abroad. With this new-found freedom, I applied to universities in the United States and eventually went to MIT.

Becoming a student at MIT was a culture shock, in a good way. In Romania at the time, the only way to communicate with the outside world was by post. If I wanted to read a journal paper, I would write to the author, and half the time they had already moved to another institution. But sometimes I’d get a reply and a paper or two. From those, I’d track down more references and addresses for correspondence, write again, and gradually build up papers to read. In contrast, at MIT I had immediate access to papers and other researchers working in the field.

Early in my PhD, I realized I wasn’t drawn to machine learning for the neural networks. I was drawn to it for the learning. That meant understanding statistics, the science of separating the signal from the noise, one of the most important aspects of machine learning. I taught myself statistics, and I must have succeeded because after finishing my PhD I was hired as an Assistant Professor of Statistics at the University of Washington in Seattle. But my research has always been at the intersection of statistics and computer science. The graduate students I advise come from statistics, engineering, and computer science.

How did your research evolve?

You know how there are reluctant leaders? I’m a reluctant researcher in unsupervised learning. Let me explain, taking as an example the simplest unsupervised learning problem, clustering.

Clustering is about finding groups in data. You might have data on people at a university. One cluster could correspond to students, another to faculty, and a third to staff. But before you know those categories, you just notice that the data separates into three groups. That’s clustering.
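To make the idea concrete, here is a minimal sketch of one standard clustering method, k-means (Lloyd's algorithm), on one-dimensional data. It is an illustration only, not the method discussed in the interview; the function name `kmeans_1d`, the initialization rule, and the sample values are all hypothetical.

```python
def kmeans_1d(points, k, iters=100):
    """Lloyd's algorithm on 1-D data. Returns (centers, labels)."""
    pts = sorted(points)
    n = len(pts)
    # Deterministic initialization (an assumption of this sketch):
    # pick k values spread evenly across the sorted data.
    centers = [pts[(2 * i + 1) * n // (2 * k)] for i in range(k)]
    labels = []
    for _ in range(iters):
        # Assignment step: each point joins its nearest center.
        labels = [min(range(k), key=lambda j: (x - centers[j]) ** 2)
                  for x in points]
        # Update step: each center moves to the mean of its members.
        new_centers = []
        for j in range(k):
            members = [x for x, lab in zip(points, labels) if lab == j]
            new_centers.append(sum(members) / len(members) if members else centers[j])
        if new_centers == centers:  # no change, so the algorithm has converged
            break
        centers = new_centers
    return centers, labels


# Made-up measurements that happen to fall into three groups.
data = [1.0, 1.2, 0.8, 5.0, 5.3, 4.9, 9.1, 8.8, 9.0]
centers, labels = kmeans_1d(data, k=3)
```

The algorithm is told only that there are three groups; it is never told what the groups mean. Attaching names such as students, faculty, and staff is a separate step.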

In grad school, I believed that clustering and unsupervised learning were too “heuristic” for my liking, that there was little to understand profoundly and rigorously about these problems and topics. But then, about the time I moved to Seattle, I became aware, through a friend, of a fascinating mathematical and combinatorial connection between my work at the time and clustering. We wrote a paper that launched a large part of my research for the next ten years. In fact, the field itself, spectral clustering, was revived through that collaboration, through what my colleague had done before and my transformation of it. On the heels of this discovery I also found a new way to compare clusterings that no one had thought of before, grounded in information theory.
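One information-theoretic distance between clusterings is the variation of information, VI(C, C') = H(C) + H(C') - 2 I(C; C'), where H is entropy and I is mutual information. The interview does not name the measure, so treating it as the one meant here is an assumption. The sketch below computes it for two labelings of the same items; the function names are illustrative.

```python
from collections import Counter
from math import log


def entropy(labels):
    """Shannon entropy (in nats) of the cluster-size distribution."""
    n = len(labels)
    return -sum((c / n) * log(c / n) for c in Counter(labels).values())


def mutual_information(a, b):
    """Mutual information (in nats) between two labelings of the same items."""
    n = len(a)
    count_a, count_b, count_ab = Counter(a), Counter(b), Counter(zip(a, b))
    # p(x, y) * log( p(x, y) / (p(x) p(y)) ), written with raw counts.
    return sum((c / n) * log(c * n / (count_a[x] * count_b[y]))
               for (x, y), c in count_ab.items())


def variation_of_information(a, b):
    """VI(a, b) = H(a) + H(b) - 2 I(a; b); zero when the clusterings agree."""
    if len(a) != len(b):
        raise ValueError("both labelings must cover the same items")
    return entropy(a) + entropy(b) - 2 * mutual_information(a, b)


same = variation_of_information([0, 0, 1, 1], [1, 1, 0, 0])   # same groups, renamed
cross = variation_of_information([0, 0, 1, 1], [0, 1, 0, 1])  # unrelated groupings
```

Because the measure depends only on which items are grouped together, renaming the clusters does not change the distance: `same` is zero up to rounding, while `cross` equals 2 log 2.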

By now I was fully convinced not only that clustering can be understood rigorously, but also that I was going to help build its foundations.

Coming closer to the present, because I had spent so much time thinking about clustering, I realized that one can do something that people thought was impossible. In practice, it’s not hard to find clusters in data, but what is difficult, and was believed to be impossible, is knowing whether you have found the right clusters.

Not all data has clusters, and you should not assume it does. On the other hand, if the data has clusters, you want assurance that you have found the right ones. I realized that you can, in fact, prove this second point. In other words, if the data has clusters, we can run an algorithm that determines whether the clusters found are correct, in a very strong sense, as one would want in practice.

The topic of rigorous guarantees for discovering structure in data, be it clusters or dimension reduction or something else, has remained one of the driving themes in my research.

After clustering, I explored an area called nonlinear dimension reduction. The more technical term is manifold learning. Manifold learning algorithms can describe a cloud of very high-dimensional points with just two or three coordinates; in effect, they create a “map” of the data called a manifold. My work in this area is due to two happy occurrences: discovering manifold learning in the first place and, later, rediscovering it. The rediscovery happened when I co-organized a program on the convergence of machine learning and many-particle systems at the Institute for Pure and Applied Mathematics in Los Angeles. Around that time I was almost ready to give up on manifold learning because I felt nothing interesting was happening in the area. But it turned out that the chemists and physicists in this program were applying manifold learning to solve their problems.

I began collaborating with them, learning about their research problems. This has been a productive and rewarding direction. It breathed new life into my research on foundational problems and pushed me to raise the bar for my work. I realized that what I wanted to do is solve problems of practical relevance using the theoretical methods at my disposal, and other new ones that I was to invent. In other words, using theory I know, not necessarily to just produce more theory, but to produce more theory that can be directly applied.

As a CIFAR AI Chair, what are your plans?

Being nominated for a CIFAR Chair is an opportunity to consider how to expand my research. I decided to tackle a problem that had puzzled me personally, but which is also a priority now that machine learning and AI are expanding so rapidly into everyday life.

It concerns the nature of explanation in machine learning. Everyone demands explainable machine learning results, interpretable machine learning and AI algorithms. But there’s a paradox here. Often the explanation or interpretation algorithm is just another, simpler and more limited machine learning algorithm. Other times, the explanation is more of a hint of why the AI or machine learning prediction should be correct. And other times, the explanations are patterns that must be “explained” by the algorithm user.

So, to me, the first question is: Why do people need explanations in the first place? What role does explanation fulfill in the interaction between computers and human specialists? Is an explanation like a proof? Can it be something other than a proof? By understanding the need for explanation, I can then proceed to describe in quantitative terms what makes a good explanation.

My goal is to make sense of this, to develop a framework for making the nature of explanation quantitative instead of qualitative, and to formulate mathematically what a good explanation is, and how to find it algorithmically. I have already started. I expect that multiple paradigms for explanations will eventually be developed, just like multiple paradigms exist now for causality, or for clustering. I believe that they will spur development in this important problem.

What is your advice to students?

One of the greatest joys of working with students is seeing them take ownership of a research problem and run away with it. Most of the work I am proud of is in close collaboration with my students.

My advice is more about how students should prepare than what they should know. Preparation means building solid foundations in math, probability, algorithms and computer science. You should take these subjects seriously as though your life depended on them. Be curious, and never stop learning and developing your skills. Every research problem requires you to learn something new.

You should be proud of your research but listen carefully to people who question it. If they don’t get it, ask yourself whether you could explain it better. If you solved problem A but someone thinks you solved B, consider whether you could also solve B, and whether that would be a good problem to work on.

Because my research combines mathematics, statistics and computer science as well as practical sciences — physics and chemistry — one good thing is that you can enter this field from many directions, as long as you’re willing to learn about the others. It’s a big and dynamic field.

Do you see collaborative research opportunities at Waterloo?

I’m a statistician and an applied mathematician as much as I’m a computer scientist. At Waterloo, mathematics, optimization, statistics and computer science — everything I do — are in the same faculty. This is a unique environment and a superb opportunity. I want to make the best of it.

And, let’s not forget, I work with data. I can collaborate with anyone who has data.

Who has inspired you?

I am inspired by people who persist in doing the right thing in difficult and adverse conditions, and by people who never stop being curious, who are excited about learning and the possibilities stemming from science and education.

I was incredibly fortunate to have such people in my mother, my grandfather, and my PhD supervisor. My mother was the first scientist in my life, and grew along with my own development into a lifelong friend and unsparing critic. My grandfather pursued his passion for the law from his youth as a penniless young man until his 90s. He also transmitted to me his admiration for English culture and was my first teacher of English. My PhD supervisor has inspired perhaps more people than any other machine learning researcher, but for me he also represents a humanist as well as a scientist — a complete human being.

What do you do in your spare time?

I like the outdoors, travel and taking photos, a good book, a good laugh.