Professor Gautam Kamath has won the 2026 Presburger Award. This prestigious honour recognizes his exceptional contributions to theoretical computer science, notably his pioneering work on computationally efficient algorithms for fundamental estimation tasks under robustness constraints.

Conferred annually since 2010 by the European Association for Theoretical Computer Science, the Presburger Award recognizes a young researcher for outstanding contributions to theoretical computer science, as documented by a paper or series of papers. The award is named in honour of Mojżesz Presburger, whose groundbreaking research on decidability of the theory of addition laid the foundation for what is now known as Presburger arithmetic.

“Congratulations to Gautam on receiving this year’s Presburger Award,” said Raouf Boutaba, University Professor and Director of the Cheriton School of Computer Science. “This prestigious honour reflects the depth and growing influence of his work. The award also adds to his growing list of professional accolades that includes an Ontario Early Researcher Award in 2025 and the Faculty of Mathematics Golden Jubilee Research Excellence Award in 2023, along with the 2024 Caspar Bowden PET Award and a recent best paper award at ICML.”

Gautam Kamath is an Assistant Professor at the Cheriton School of Computer Science, a Canada CIFAR AI Chair at the Vector Institute, and a member of Waterloo’s Cybersecurity and Privacy Institute. An expert in algorithms, statistics and machine learning, his research focuses on modern data analysis under constraints such as differential privacy and robustness.

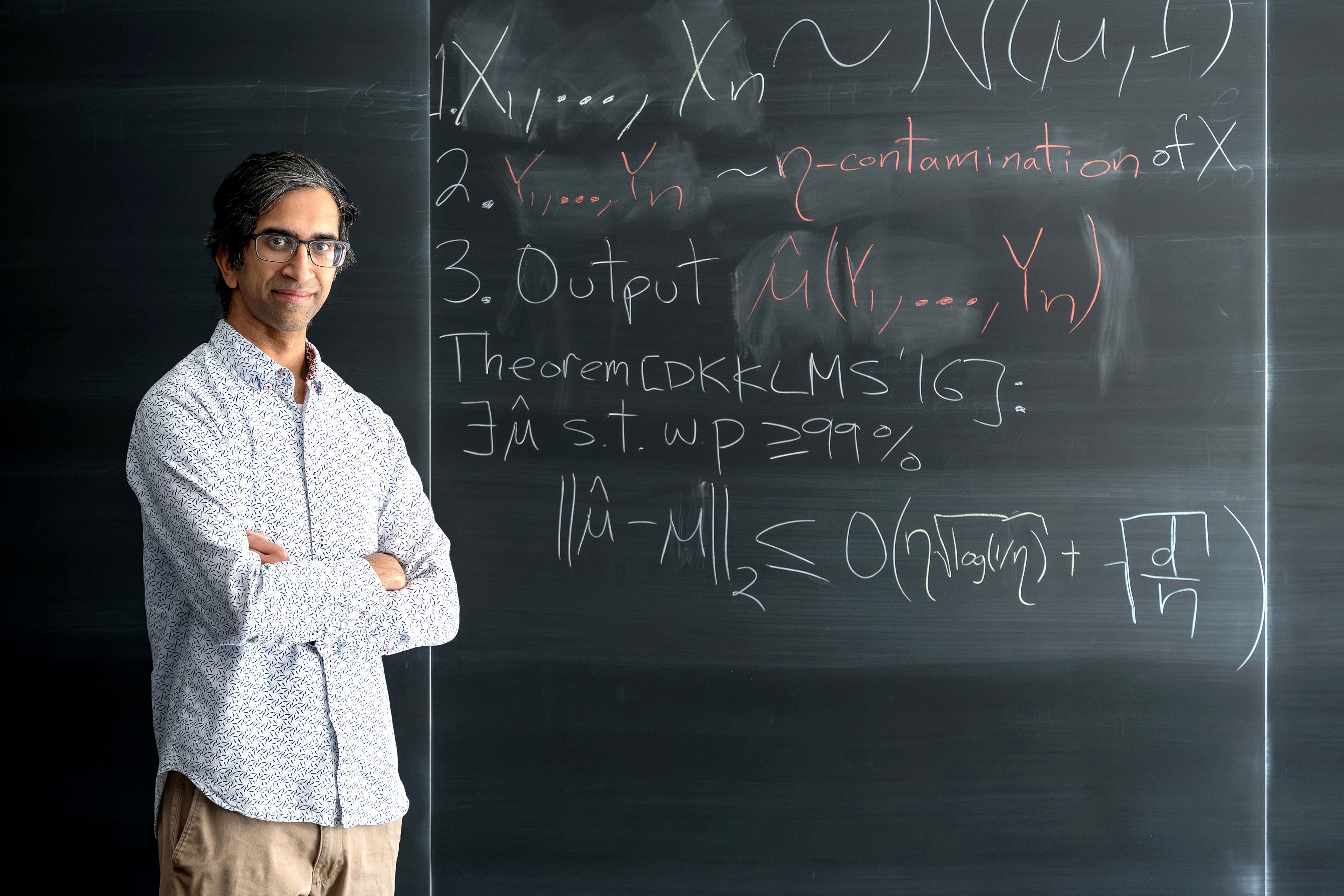

As of April 2026, his publications have been cited more than 6,800 times, with an h-index of 41, according to Google Scholar. His most highly cited paper, on efficient robust estimation, whose key ideas are illustrated on the blackboard, helped define a new area of study in high-dimensional statistics.

About Professor Kamath’s research

Professor Kamath has pioneered computationally efficient algorithms for fundamental estimation problems under robustness constraints. His seminal 2016 paper helped launch an entire research area, with a rapidly expanding frontier spanning topics such as list-decodable learning and differential privacy. Over the past decade, he has been a leading contributor to many of these developments.

Professor Kamath’s research begins with mean estimation, the most fundamental statistical question: Given n independent and identically distributed samples from a d-dimensional Gaussian distribution, how can the mean be estimated? The simple, efficient and optimal statistic is the empirical mean.

The question, however, becomes much more interesting when a portion of the data is corrupted (i.e., an η-fraction of the dataset), whether by model misspecification or a malicious adversary. The empirical mean can now be arbitrarily bad. In 2016, Professor Kamath and his collaborators resolved this classical problem by applying an algorithmic lens. They gave the first polynomial-time algorithm that achieves a dimension-independent 𝑂(η) excess error. Their main algorithm is an elegant application of the spectral method. By inspecting the top eigenvalue of the empirical covariance matrix, they can identify and prune outliers by inspecting the corresponding eigenvector direction, or certify that the empirical mean of the remaining points is accurate. He and his collaborators also give algorithms for Gaussians with unknown covariances and a host of other settings.

Their paper, Robust Estimators in High-Dimensions Without the Computational Intractability, was presented at FOCS 2016, the 57th Annual Symposium on Foundations of Computer Science. Highly acclaimed by the community, the paper was invited to several prestigious venues, among them the SICOMP Special Issue for FOCS 2016, Highlights of Algorithms 2017, and Communications of the ACM Research Highlights. As of April 2026, it has received more than 660 citations and remains the most cited paper from FOCS 2016 and onward.

Professor Kamath and his collaborators have produced several key works in this area subsequently, including algorithms operating under much weaker distributional assumptions at ICML 2017, the 34th International Conference on Machine Learning; improved algorithms for Gaussians at SODA 2018, the 29th ACM-SIAM Symposium on Discrete Algorithms; and algorithms for protecting machine learning models from data poisoning attacks at ICML 2019, 36th International Conference on Machine Learning. Beyond these contributions, his work has helped spark widespread interest across the algorithms community.

Meanwhile, Professor Kamath has made significant contributions to differential privacy. These papers include Privately Learning High-Dimensional Distributions (COLT 2019), The Discrete Gaussian for Differential Privacy (NeurIPS 2020), Locally Private Hypothesis Selection (COLT 2020), and Differentially Private Algorithms for Learning Mixtures of Separated Gaussians (NeurIPS 2019).

Differential privacy can be viewed as a form of robustness, requiring that an algorithm’s output remain stable under small changes to its input. A key open problem was to design efficient optimal algorithms for mean estimation. In Efficient Mean Estimation with Pure Differential Privacy via a Sum-of-squares Exponential Mechanism (STOC 2022), Professor Kamath and his collaborators solved the open problem, again using the algorithmic lens he pioneered in 2016. This connection is not incidental. In subsequent work, Robustness Implies Privacy in Statistical Estimation (STOC 2023), Professor Kamath and his collaborators showed that any robust estimator can be converted to a private one, evidence of deep algorithmic connections that underpin the field.

Beyond his research contributions, Professor Kamath is committed to advancing and sharing these ideas within the broader community. He co-organized workshops on robust and private estimation at the Fields Institute in 2022 and at the Toyota Technological Institute at Chicago in 2024, and authored a survey article on the broader landscape of robustness in algorithmic statistics published in IEEE BITS: The Information Theory Magazine.